The internet is a volatile place, and search engine results pages (SERPs) are even more so. Search engines change their trends and algorithms without warning, and if you do not adapt quickly enough, it won’t take long to fall off the first page.

An SEO audit will help you identify areas of improvement in your website that will ensure enhanced performance in the search engines. A comprehensive SEO audit, in the simplest of terms, is like a health check-up of your website, ensuring it remains search engine friendly.

During an SEO audit, your website is checked against a list of factors that affect ranking. While a number of tools are available, a custom website audit is recommended because it is designed specifically around the needs of your website. Follow this guide to do a customized SEO audit of your website:

How to Perform an SEO Audit on Your Website:

The SEO audit checklist includes many steps, but here we will start with a few important tests to conduct on your website, step by step.

Conduct a Crawl Test:

A crawl test can be conducted using tools like Screaming Frog or Beam Us Up. These tools crawl your website similarly to how a search engine crawler does and help identify problems in website structure and SEO setup.

Alternatively, you can even develop your own website crawler that will specifically target the areas that are important to you.

In any case, a crawl report will include information about issues that make your website less crawler friendly. The crawl test will reveal all the broken URLs, which are reported with 4xx and 5xx HTTP status codes. While fixing broken URLs, if you find that the page behind a broken URL no longer exists on your website, redirect the URL to a relevant replacement within your website.
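As a rough illustration of reading a crawl report, the sketch below groups crawled URLs by error class. The `find_broken_urls` helper and the `sample_crawl` data are hypothetical; a real crawler export will have its own format.

```python
from typing import Dict, List


def find_broken_urls(crawl_results: Dict[str, int]) -> Dict[str, List[str]]:
    """Group crawled URLs by error class based on their HTTP status code."""
    broken: Dict[str, List[str]] = {"client_error": [], "server_error": []}
    for url, status in crawl_results.items():
        if 400 <= status <= 499:
            broken["client_error"].append(url)   # e.g. 404 Not Found
        elif 500 <= status <= 599:
            broken["server_error"].append(url)   # e.g. 500 Internal Server Error
    return broken


# Hypothetical crawl output: URL -> status code reported by the crawler.
sample_crawl = {
    "https://example.com/": 200,
    "https://example.com/old-page": 404,
    "https://example.com/api/report": 500,
    "https://example.com/blog": 301,
}
report = find_broken_urls(sample_crawl)
print(report)
```

URLs in the `client_error` group are the usual candidates for a redirect to a relevant replacement page.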

For any redirection, ensure you are using a 301 permanent redirect. This way, you can avoid a dip in search traffic, because the authority that the old URL earned through inbound links is passed on to the destination page. For example, the two links given below are different, but when you click on them, they both take you to the same destination, https://www.google.com:

- http://google.com

- https://google.com

Moreover, the URL to which the traffic is being directed enjoys the same search engine authority as either of them.
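If your crawler records the redirects it encounters, a small check like the one below can flag those that are not 301s. This is only a sketch: the `Redirect` record and the `observed` sample data are hypothetical, and it follows this article's advice of treating 301 as the only acceptable status.

```python
from typing import List, NamedTuple


class Redirect(NamedTuple):
    source: str   # URL that was requested
    status: int   # HTTP status code of the redirect response
    target: str   # URL the response points to


def non_permanent_redirects(redirects: List[Redirect]) -> List[Redirect]:
    """Return the redirects that do not use a 301 status code."""
    return [r for r in redirects if r.status != 301]


# Hypothetical redirects observed during a crawl.
observed = [
    Redirect("http://example.com/", 301, "https://www.example.com/"),
    Redirect("https://example.com/", 302, "https://www.example.com/"),
    Redirect("https://www.example.com/old-page", 307, "https://www.example.com/new-page"),
]
to_fix = non_permanent_redirects(observed)
for r in to_fix:
    print(f"{r.source} -> {r.target} uses {r.status}, expected 301")
```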

To make sure your audit is thorough, it is a good idea to register your website with webmaster tools for both Bing and Google. Here is all you need to know about these tools.

Similarly, you can also analyze traffic patterns to identify broken links or duplicate content. For instance, if a lot of your traffic bounces off your website after clicking a certain link, it might be broken.

After the crawl test, the actual audit begins. You will audit your website on a number of parameters, which can be divided into four broad categories:

- Visibility and accessibility of the website

- Indexability

- On-page ranking factors

- Off-page ranking factors

Let’s look at each of the categories in detail:

1. Visibility and Accessibility of the Website (Technical SEO Audit Checklist):

If your website is not visible to a search engine, it will not be visible to the people using the search engine, and if that is the case, it is just as good as not having a website.

Robots.txt

Robots.txt is a file placed in the root of your website that tells search engine crawlers which pages or directories they should not crawl. For instance, if you do not want your images to be listed in search results, a rule in robots.txt can stop the search engine from ‘crawling’ them. While this is sometimes useful, it can cause a lot of problems if one of these rules blocks one of your major pages.

Webmaster tools work great at identifying URLs that are blocked. Once you have identified them, you can remove or narrow the rule that blocks them so that the pages are visible to a search engine crawler.
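You can also check important URLs against your robots.txt rules yourself. The sketch below uses Python's standard `urllib.robotparser` module. The `ROBOTS_TXT` rules, the `important_pages` list, and the `blocked_urls` helper are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; in practice, load your site's own file.
ROBOTS_TXT = """\
User-agent: *
Disallow: /images/
Disallow: /private/
"""


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt rules forbid for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical pages that should remain crawlable.
important_pages = [
    "https://example.com/",
    "https://example.com/products",
    "https://example.com/images/logo.png",
]
blocked = blocked_urls(ROBOTS_TXT, important_pages)
print(blocked)
```

Any major page that shows up in `blocked` points to a rule that is worth reviewing.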

Meta Robot Tags

A meta robots tag is an instruction to the crawler about the page being crawled. Different values of this tag dictate different actions. For instance, Google will index a page whose meta robots tag is set to “index”, and if the value is set to “follow”, the crawler will go through the links on the page, regardless of whether the page itself can be indexed.

However, it is important to note that not all search engines support the same set of values. This table shows which values are identified by major search engines:

This is a guide about what each of these values means.
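To see which directives a page actually declares, you could extract them from its HTML. The following is a minimal sketch using the standard `html.parser` module. The `MetaRobotsParser` class, the `robots_directives` helper, and the sample markup are hypothetical.

```python
from html.parser import HTMLParser


class MetaRobotsParser(HTMLParser):
    """Collects the directives found in <meta name="robots"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        if (attr.get("name") or "").lower() == "robots":
            content = attr.get("content") or ""
            # Directives are comma-separated, e.g. "noindex, follow".
            self.directives.update(
                part.strip().lower() for part in content.split(",") if part.strip()
            )


def robots_directives(html):
    """Return the set of meta robots directives declared in the HTML."""
    parser = MetaRobotsParser()
    parser.feed(html)
    return parser.directives


sample_html = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
directives = robots_directives(sample_html)
print(sorted(directives))
```

A page you expect to rank should not report `noindex` here.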

XML Sitemaps

Search engines do not only rank websites, they rank web pages. This is where XML sitemaps step in. As the name suggests, an XML sitemap is a map of your website that the search engine crawlers will use as a guide while going through your website.

The sitemap generator by XML-sitemaps.com is a great tool that would automatically generate a sitemap for you. Once that is done, make sure the sitemap follows the standard sitemap protocol, and also that it mentions all the pages you found during the crawl test conducted earlier. Once this is done, it is time to upload the sitemap to your web server and relevant webmaster tools.
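The comparison between the sitemap and the crawl results can be sketched as follows, using the standard `xml.etree.ElementTree` module and the namespace defined by the sitemap protocol. The `sitemap_urls` helper, the sample sitemap, and the `crawled` set are hypothetical.

```python
import xml.etree.ElementTree as ET

# Namespace used by the standard sitemap protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(sitemap_xml):
    """Return the set of URLs listed in <loc> elements of a sitemap."""
    root = ET.fromstring(sitemap_xml)
    return {
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    }


# Hypothetical sitemap and crawl results.
sample_sitemap = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/products</loc></url>
</urlset>"""
crawled = {"https://example.com/", "https://example.com/products", "https://example.com/blog"}

listed = sitemap_urls(sample_sitemap)
missing_from_sitemap = crawled - listed   # pages the crawl found but the sitemap omits
not_crawled = listed - crawled            # sitemap entries the crawl never reached
print(missing_from_sitemap, not_crawled)
```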

Website Architecture, Elements, & Performance

The term website architecture refers to the structure of the website, in terms of its depth (the number of page levels) and the breadth of information present on the different pages. This is a crucial ranking factor. For instance, if the navigational elements, which are part of the website architecture, are made inaccessible by Flash or JavaScript, crawlers may be unable to reach the pages behind them, and traffic is likely to dip.

Similarly, website architecture has a direct influence on the performance of your website. Users and search engines alike have very limited time to browse your website. This means that if your website takes too long to load, it will not be thoroughly crawled by search engines or browsed by users. You can evaluate performance with tools like Google PageSpeed Insights or, for more comprehensive results, Pingdom. These tools show the pages, as well as the specific items on those pages, that are slowing down your website. Once you have identified these, you can apply relevant solutions such as compression or a Content Delivery Network (CDN) for heavy elements on the pages.
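To get a feel for what compression can save on text-based assets, here is a small sketch using Python's standard `gzip` module. The `compression_savings` helper and the repetitive sample markup are made up for illustration, and real pages will compress less dramatically.

```python
import gzip


def compression_savings(payload: bytes) -> float:
    """Return the fraction of bytes saved by gzip-compressing the payload."""
    compressed = gzip.compress(payload)
    return 1 - len(compressed) / len(payload)


# Hypothetical, highly repetitive HTML standing in for a real page.
html = ("<div class='product'><p>Sample product description</p></div>\n" * 200).encode("utf-8")
savings = compression_savings(html)
print(f"gzip would save about {savings:.0%} of the transfer size")
```

In production, compression is normally enabled in the web server or CDN configuration rather than in application code.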

Remember, search engine crawlers have become more intelligent, and optimizing for SEO has become synonymous with optimizing for humans. Keeping that in mind, while auditing your website architecture, manually go to your homepage and count the number of clicks it takes to reach a page that you consider important. While the most important pages should always sit at the top of your architecture hierarchy, the ideal website has its content distributed uniformly, both vertically and horizontally.
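The manual click count can also be approximated from crawl data. The sketch below runs a breadth-first search over an internal link graph to find the minimum number of clicks from the homepage to each page. The `click_depths` helper and the `site_links` graph are hypothetical.

```python
from collections import deque


def click_depths(links, home):
    """Return the minimum number of clicks from home to every reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:          # first visit is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link graph: page -> pages it links to.
site_links = {
    "/": ["/products", "/blog"],
    "/products": ["/products/widget"],
    "/blog": ["/blog/post-1"],
    "/blog/post-1": ["/products/widget", "/pricing"],
}
depths = click_depths(site_links, "/")
print(depths)
```

In this sample, `/pricing` sits three clicks deep, which would be worth revisiting if it is an important page.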

2. Indexability:

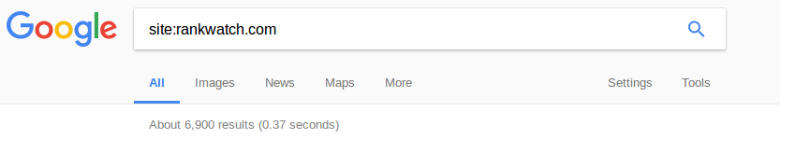

The first category of audits was to make sure that your website is visible to search engines. However, this still doesn’t necessarily mean it is being indexed by the search engine. The first step in this direction is to make sure your website and all the important pages are being indexed. To do this, type “site:” followed by your domain name into the search engine and look at the number of results.

While this number is far from accurate, comparing it against the number of pages in your sitemap will give you important insight. If the index count is significantly lower than the actual number, it means the search engine is not indexing a lot of your pages. If the opposite is the case, it usually suggests your website is serving duplicate content. If the numbers are roughly similar, there is nothing to worry about.
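The comparison above can be expressed as a simple rule of thumb. In the sketch below, the `compare_index_count` helper and its 20% `tolerance` are assumptions chosen for illustration, not values prescribed by any search engine.

```python
def compare_index_count(indexed: int, in_sitemap: int, tolerance: float = 0.2) -> str:
    """Classify the gap between the indexed page count and the sitemap page count."""
    if in_sitemap <= 0:
        raise ValueError("the sitemap must list at least one page")
    ratio = indexed / in_sitemap
    if ratio < 1 - tolerance:
        return "under-indexed"                # many pages are missing from the index
    if ratio > 1 + tolerance:
        return "possible duplicate content"   # the index holds more URLs than expected
    return "ok"


print(compare_index_count(40, 100))
print(compare_index_count(180, 100))
print(compare_index_count(95, 100))
```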

Once you have made sure your website is being indexed, it is time to ensure the search engine is also indexing all the important pages of the website. Doing so consists of two simple tests:

- Page Search Test: Type in the URL of your website and other important pages, and check if they show up in the results. If they don’t, check the accessibility of the page as we did in the previous section. If it checks out, chances are your page is being penalized.

- Brand Search Test: Enter the name of your business or brand in the search bar and check if your website and/or other pages are in the top results. Again, if this isn’t the case, your page might have been penalized.

If you think your website has been penalized, follow these steps to fix this:

Step 1. Confirm that you are being penalized: Re-audit and re-check all the pages to make sure you have actually been penalized. In most cases, you will find a relevant message in your webmaster tools if your website is being penalized. If there is no such message, you might have been affected by an algorithmic update in the search engine.

Step 2. Fix issues: If you have received a notification in your webmaster tools, you know what needs to be fixed. However, in the case of an algorithmic update, you will have to find out what changes have been introduced. Updates in algorithms affect a lot of businesses and websites, so the information is usually available across popular SEO forums and news sites. Once you have figured out why the website was penalized, fixing those issues is the way to recover from Google penalties.

Step 3. Notify the search engine: In the case of updates in search engine algorithms, you might have to wait till it refreshes to see your website indexed once again. However, if you are being penalized, you can contact the search engine and request a re-evaluation of your website. Both Google and Bing offer clear instructions on how to go about this process.

3. On-Page Ranking Factors (On-Page SEO Audit):

After analyzing the visibility and indexability of your website and its pages, it is time to check your website for on-page ranking factors. The term “on-page ranking factors” refers to the elements and characteristics of your website and pages that influence SERP rankings. These are:

- URLs: A URL is responsible for bringing people to your website. A user will not click on the URL if they do not find it trustworthy. For that reason, your URL should be readable, short, and relevant to the content on the page. Moreover, a great URL contains the relevant keywords and is still shorter than 115 characters. For separating words, it is best to use hyphens.

- Content: Content has been identified as one of the most important ranking factors. Everything from the titles to the content body itself greatly influences the SEO performance of your website. Apart from proper formatting and impeccable grammar, it is crucial to ensure that your content is unique. Here are a few things to keep in mind while auditing content:

- Uniqueness: Google and other search engines heavily penalize copied content. Tools like Copyscape are great when it comes to checking the uniqueness of your content. Moreover, if there is content duplication within your website, it may negatively affect your rankings. Make sure you get rid of such duplicates with 301 redirects.

- Quality: Ensure there are absolutely no spelling or grammatical errors on any of the pages. Such errors will compromise the professional credibility of your business. High-quality content is not only free of errors, but it is also reader-friendly and delivers real value to the reader.

- Keywords: There is no denying the importance of keywords in SEO. Therefore, it is imperative to include keywords on your page, preferably in the first few lines. On the other hand, spamming your content with keywords will bring you no results whatsoever.

- Accessibility: Lastly, it is important to ensure that your content is accessible by search engines. To do so, ensure that you do not put your content in Flash or JavaScript. It is also important to make sure that multiple pages of your website are not targeting the same keywords. This confuses search engine crawlers and users alike.

- Internal Linking: Internal linking is extremely beneficial for your website as it helps in improving the rankings, and encourages users to spend more time on your website. Make sure all your pages contain between two and ten internal links, with the most important pages getting links from your homepage.

- Titles: Titles are the single most visible element of a web page. They are not only the first thing a user reads on arriving at a page, but they are also displayed in the SERPs, so an ineffective title can waste the rest of your SEO effort. Make sure your titles contain a keyword and are short, precise, and descriptive.

- Meta Descriptions: Meta descriptions, just like titles, are not just a ranking factor, but they greatly influence the click-through rates. Ideal meta descriptions have the following qualities:

- Short: It is best to keep your meta description to roughly 155 characters. Google typically truncates anything longer when displaying it in its SERPs.

- The right amount of keywords: While keywords are important, don’t over-optimize your Meta descriptions by stuffing a ton of keywords.

- Relevant: Relevant meta descriptions offer a peek inside the content of your page. In some cases, it is the deciding factor when it comes to clicking through on your link.

- Images: Images might be an important part of any web page, but search engines cannot read them. Instead of the image, the crawlers read the alt text and the filename. Ideally, both should be relevant to the image and should contain at least one keyword. Also, keep in mind that the size of an image file affects the page load time. If your images are too heavy, consider using a CDN.

- Outlinks: Links leading from your website to reputed, authoritative websites add to the credibility of your brand, as well as your website itself. However, a few things are to be kept in mind while linking out:

- Only link to authority websites

- Links should be relevant to the content on the page

- Ensure the presence of relevant anchor text

- Content-to-ads ratio: A lot of websites depend on ads to generate revenue. If yours is one of them, make sure you don’t have too many ads above the fold, as this might attract a penalty. Moreover, having too many ads on your website will only frustrate users and drive them away. Instead, maintain a healthy content-to-ads ratio, and place your ads strategically so that they receive the maximum amount of attention without interfering with the content on your website.
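Several of the on-page checks above lend themselves to automation. The following is a minimal sketch using the standard `html.parser` module, not a complete auditor. The `OnPageParser` and `audit_page` names and the sample page are hypothetical. The thresholds come from this article (115 characters for URLs, 155 for meta descriptions, two to ten internal links), and treating underscores as a problem is an assumption derived from the advice to use hyphens.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse

MAX_URL_LENGTH = 115          # URL length limit suggested in the article
MAX_DESCRIPTION_LENGTH = 155  # truncation point mentioned in the article
MIN_INTERNAL_LINKS, MAX_INTERNAL_LINKS = 2, 10


class OnPageParser(HTMLParser):
    """Collects the title, meta description, links, and images of a page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = ""
        self.links = []
        self.images_without_alt = []
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attr.get("name") or "").lower() == "description":
            self.description = attr.get("content") or ""
        elif tag == "a" and attr.get("href"):
            self.links.append(attr["href"])
        elif tag == "img" and not (attr.get("alt") or "").strip():
            self.images_without_alt.append(attr.get("src") or "")

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def is_internal(href, host):
    """Treat relative links and http(s) links to the same host as internal."""
    parts = urlparse(href)
    return parts.scheme in ("", "http", "https") and parts.netloc in ("", host)


def audit_page(url, html):
    """Return a list of on-page issues found for the given URL and HTML."""
    parser = OnPageParser()
    parser.feed(html)
    host = urlparse(url).netloc
    internal = [h for h in parser.links if is_internal(h, host)]
    issues = []
    if len(url) >= MAX_URL_LENGTH:
        issues.append("URL is too long")
    if "_" in urlparse(url).path:
        issues.append("URL uses underscores instead of hyphens")
    if not parser.title.strip():
        issues.append("missing title")
    if not parser.description.strip():
        issues.append("missing meta description")
    elif len(parser.description) > MAX_DESCRIPTION_LENGTH:
        issues.append("meta description is too long")
    if not MIN_INTERNAL_LINKS <= len(internal) <= MAX_INTERNAL_LINKS:
        issues.append("internal link count outside 2-10")
    if parser.images_without_alt:
        issues.append("images without alt text")
    return issues


# Hypothetical page used to exercise the checks.
page_url = "https://example.com/blog/seo_audit_guide"
page_html = """<html><head><title>SEO Audit Guide</title>
<meta name="description" content="A short guide to auditing a website.">
</head><body>
<a href="/blog">Blog</a>
<a href="https://example.com/contact">Contact</a>
<a href="https://other.example.org/">Partner</a>
<img src="/img/chart.png">
<img src="/img/logo.png" alt="Company logo">
</body></html>"""
issues = audit_page(page_url, page_html)
print(issues)
```

Checks such as content quality, uniqueness, and the relevance of outbound links still need a human reviewer or dedicated tools.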

4. Off-Page Ranking Factors (Off-Page SEO Audit):

Unlike the previous categories, the off-page ranking factors for your website are not straightforward. These include:

- Backlinking profile analysis: Backlinking is an important part of SEO. However, gone are the days when a healthy number of backlinks was enough to land on the first page of the SERPs. Nowadays, it is important to ensure that you link to, and are linked from, reliable and authoritative websites. To check this, use quality tools. Linkio, for example, is a tool that suggests the anchor text to use in your internal and external linking, with the aim of bringing you more organic traffic and helping you get ahead of your competitors quickly.

- Social Media: The world is going social, and if you have not created social media profiles for your business, now would be a great time to begin. This will provide you with invaluable social proof, along with an additional (and extremely important) source of traffic. Moreover, just like with backlinking, receiving recommendations from authority figures on social media can bring about amazing results.

- Responsive Web Design and Accelerated Mobile Pages: With more and more users shifting to mobile devices, it is more important than ever to have a responsive website. Moreover, search engines rank responsive websites and AMP pages higher than their non-responsive competition.

- Trustworthiness: Trust is an important aspect of the success of your SEO campaign. Building trust begins with ensuring your website is free of malware and spam. Safe Browsing API by Google is a great resource that you can refer to while checking your website for malware. Apart from this, the trustworthiness of your website depends on the quality of content, the use of keywords, and the other websites it is linking to.

Conclusion:

Once you are done with the audit, it is time to present your findings in an actionable manner. When you are preparing your audit report, make sure you present the most critical issues first. Most importantly, a good audit report comes with actionable guidelines to improve performance.

FAQs on SEO Audit:

What is a website SEO audit?

A website SEO audit is a comprehensive analysis of a website’s SEO performance, including technical and on-page optimization factors. It helps identify areas for improvement and provides recommendations for optimizing the website for better search engine visibility and ranking.

Why is a website SEO audit important?

A website SEO audit is important because it helps to identify any technical or on-page issues that may be hindering the website’s search engine visibility and ranking. By identifying and addressing these issues, a website can improve its search engine performance and drive more traffic and conversions.

What are the common issues that a website SEO audit may identify?

Common issues that a website SEO audit may identify include broken links, duplicate content, poor keyword usage, slow page loading times, and lack of mobile optimization.

How often should you conduct a website SEO audit?

It is recommended to conduct a website SEO audit at least once a year, but it’s also important to keep an eye on your website’s performance and make changes as necessary.

Can I conduct a website SEO audit myself?

Yes, you can conduct a website SEO audit yourself using various online tools and resources. However, it can be beneficial to work with an experienced SEO professional to ensure a thorough and accurate audit and to help implement the recommended changes.

Hey Vaibhav, enjoyed your article, it provides great value.

I’d like to ask you a question and I’d really appreciate it if you took the time to answer it.

You mentioned images play a huge role in any web page. What are your thoughts on videos? How much of a role do they play according to you?

Kind regards,

Filip

I am glad you liked it, Filip.

To answer your question, you should always embed videos on your website via third-party hosting services like YouTube, Vimeo or Wistia rather than self-hosting them.

If you’re uploading the videos through your own web server, make sure to use GZIP compression.