Imagine trusting a colleague to write a legal brief, only to find out later that they invented half the case citations. That’s not a hypothetical anymore. A Deloitte report submitted to the Australian government was found to contain fabricated academic sources and a fake court quote. The cost? A$440,000. The culprit? Uncontrolled AI output.

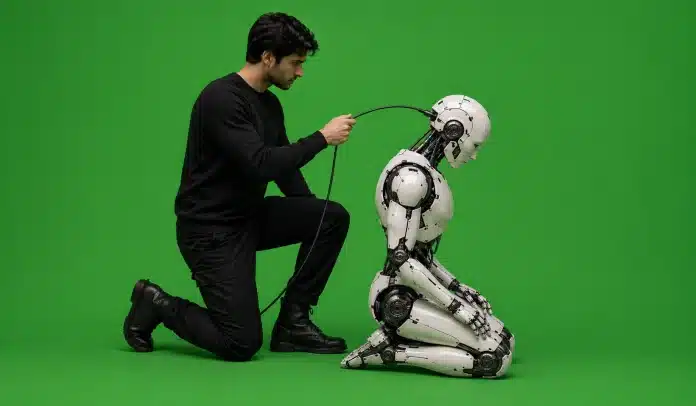

That story captures, more vividly than any definition could, why controlling the output of generative AI systems is so important. This isn’t a niche concern for machine learning researchers.

It affects every business, developer, student, and professional who uses AI in their work, which, at this point, is most of us. So let’s break it down properly.

What Is the Main Goal of Generative AI?

Before we talk about control, let’s get clear on what generative AI is actually trying to do. The main goal of generative AI is to produce new, useful content like text, images, audio, and code based on patterns learned from massive datasets. It’s genuinely impressive.

ChatGPT can write a policy memo. Midjourney is an excellent example of generative AI producing studio-quality images from a three-word prompt. Claude Code writes functional code in seconds.

But here’s the thing: generating output and generating correct, safe, appropriate output are two very different problems. The model has no built-in conscience. No fact-checker running in the background. No sense of shame when it confidently invents something that doesn’t exist.

That gap between what generative AI can produce and what it should produce is exactly what output control is designed to close.

The Hallucination Problem Is Worse Than You Think

People often assume AI hallucination is a rare glitch. But, on complex open-domain queries, hallucination rates in leading models can exceed 33%, according to Stanford HAI research. OpenAI’s o3 reasoning model, one of the most advanced available, hallucinated 33% of the time on the PersonQA benchmark.

Legal queries are even grimmer: LLMs hallucinate between 69% and 88% of the time on specific legal questions, according to the Stanford RegLab. In healthcare domains, the stakes are higher still, with ECRI listing AI risks as the number one health technology hazard for 2025.

The business impact is real and quantifiable. A Deloitte survey found that 47% of enterprise AI users made at least one major business decision based on hallucinated AI content in 2024. Global losses attributed to AI hallucinations reached $67.4 billion that year.

This is why controlling the output of generative AI systems isn’t just an ethical preference; it’s a financial and operational necessity.

What Happens Without Output Control?

The dangers of AI go far beyond embarrassing typos. Uncontrolled generative AI output creates at least four categories of serious risk:

- Misinformation at scale: A single poorly controlled AI system can generate thousands of pieces of inaccurate content in minutes. In just the first quarter of 2025, 12,842 AI-generated articles were removed from online platforms due to hallucinated content (Content Authenticity Coalition, 2025). That’s not a content moderation problem; it’s a structural one.

- Bias amplification: Generative AI learns from human-created data, which means it inherits human biases on a massive scale. MIT Sloan research has documented AI systems producing content that perpetuates gender and racial stereotypes. Without filtering mechanisms, these biases don’t just persist; they compound, because AI-generated content gets fed back into future training datasets.

- Security and privacy risks: AI systems can generate content that exposes sensitive information, facilitates phishing, or produces convincing deepfakes for social engineering. The question of how generative AI has affected security is increasingly urgent for businesses managing customer data. Protecting that data starts well before AI enters the picture: sound network security practices form the foundation that any responsible AI deployment needs to build on.

- Legal and regulatory exposure: GDPR, the EU AI Act, and India’s emerging AI governance frameworks all carry liability implications for organisations that deploy uncontrolled AI. “We didn’t know what the model would output” is not a legal defence that holds up particularly well.

So What Does “Responsible AI” Actually Mean?

Responsible AI, at its core, means building and deploying AI systems that are accurate, fair, transparent, and accountable. The four key principles of responsible AI, as defined by frameworks from organisations like Microsoft and Google, typically include: fairness, reliability, privacy, and accountability. These aren’t philosophical abstractions; they translate into concrete engineering decisions.

The responsibility of developers using generative AI runs deep here. Developers can’t simply release a model and hope for the best. They’re responsible for what that model produces, including the unintended outputs. That means building guardrails, filtering layers, human review processes, and feedback mechanisms from the start.

Fair and responsible AI for consumers is equally important from the user side. If you’re using ChatGPT to draft content for your business, or Gemini to summarise a legal document, you bear some responsibility for verifying what the model produces before you act on it or publish it.

How Do AI Governance Frameworks Actually Control Output?

Let’s look at the practical mechanisms, because this is where it gets interesting.

Prompt engineering and system prompts.

The most immediate lever is how the AI is instructed in the first place. System prompts (instructions given to the model before any user interaction) set the boundaries of what the model should and shouldn’t do. A well-designed system prompt can dramatically reduce off-topic, harmful, or misleading output. This is why prompt engineering has become a valued professional skill.
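To make the idea concrete, here is a minimal sketch of where a system prompt sits in a request. It uses the role-tagged message format that many chat-style APIs share, but it doesn’t call any particular vendor’s API. The function name `build_messages`, the prompt wording, and the "Acme" billing scenario are all made up for illustration.

```python
# Hypothetical sketch: names and prompt text are illustrative assumptions,
# not taken from any specific product or API.
SYSTEM_PROMPT = (
    "You are a support assistant for Acme's billing team. "
    "Answer only billing questions. "
    "If you are not sure of a fact, say so instead of guessing. "
    "Never give legal or medical advice."
)


def build_messages(user_input: str, system_prompt: str = SYSTEM_PROMPT) -> list[dict[str, str]]:
    """Place the system prompt ahead of the user's message."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_input.strip()},
    ]


messages = build_messages("  Why was I charged twice this month?  ")
```

The point of the structure is that the boundaries travel with every request, so the model sees them before it sees anything the user typed.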

Retrieval-Augmented Generation (RAG).

RAG grounds AI responses in verified external documents rather than letting the model free-associate from training data. Research shows RAG can reduce hallucination rates by 40–71%, making it one of the most effective control mechanisms available.
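Here’s a toy sketch of the RAG pattern, assuming a tiny in-memory document list and simple word-overlap scoring. Real systems typically use embeddings and a vector store instead; the documents, function names, and prompt wording below are hypothetical.

```python
import re

# Hypothetical knowledge base: in practice these would be verified documents.
DOCUMENTS = [
    "Refunds are issued within 14 days of a returned item being received.",
    "Standard shipping takes 3 to 5 business days within the country.",
    "Gift cards cannot be exchanged for cash.",
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list[str], top_k: int = 1) -> list[str]:
    """Rank documents by how many words they share with the query (toy scoring)."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(doc)), doc) for doc in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    # Drop documents that share no words with the query at all.
    return [doc for score, doc in scored[:top_k] if score > 0]


def build_grounded_prompt(query: str, documents: list[str]) -> str:
    """Build a prompt that tells the model to answer only from retrieved text."""
    sources = retrieve(query, documents)
    context = "\n".join(f"- {doc}" for doc in sources) or "(no relevant source found)"
    return (
        "Answer using only the sources below. "
        "If the sources do not contain the answer, say you do not know.\n\n"
        f"Sources:\n{context}\n\nQuestion: {query}"
    )


prompt = build_grounded_prompt("How long do refunds take?", DOCUMENTS)
```

The retrieval step is deliberately crude, but the shape is the same at any scale: find relevant source text first, then instruct the model to stay inside it.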

Human-in-the-loop review.

76% of enterprises now include human review processes before AI-generated content is deployed (IBM AI Adoption Index, 2025). This isn’t a sign of AI failure; it’s a sign of mature, responsible deployment.
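One simple way to picture a human-in-the-loop gate is a queue where nothing is publishable until a named person approves it. The sketch below is an assumption about how such a gate might look, not a description of any specific product; `Draft`, `ReviewQueue`, and the status strings are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Draft:
    text: str
    status: str = "pending_review"  # nothing is publishable by default
    reviewer: Optional[str] = None


@dataclass
class ReviewQueue:
    drafts: list[Draft] = field(default_factory=list)

    def submit(self, text: str) -> Draft:
        """Add AI-generated text to the queue as an unapproved draft."""
        draft = Draft(text=text)
        self.drafts.append(draft)
        return draft

    def decide(self, draft: Draft, reviewer: str, approved: bool) -> None:
        """Record a named human's decision on a draft."""
        draft.status = "approved" if approved else "rejected"
        draft.reviewer = reviewer

    def publishable(self) -> list[Draft]:
        """Only drafts a human has approved may be published."""
        return [d for d in self.drafts if d.status == "approved"]


queue = ReviewQueue()
draft = queue.submit("Our return window is 30 days.")
```

Calling `queue.decide(draft, reviewer="alice", approved=True)` is what moves the draft into `queue.publishable()`. The default state is the design choice that matters here: review is opt-out-proof, because skipping it leaves the draft unpublished.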

Output filtering and content moderation layers.

Most production AI systems run outputs through a secondary filtering model that checks for harmful, biased, or off-policy content before it reaches the user. Think of it as quality control on a factory floor. The primary machine does the heavy lifting, but a secondary check catches what slips through.
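As a rough sketch of that secondary check, the example below uses plain rules (a regular expression and a phrase list) as a stand-in for the filtering model described above. The patterns, blocked phrases, and fallback message are illustrative assumptions; a production filter would usually be a trained classifier with far broader coverage.

```python
import re

# Hypothetical rules standing in for a secondary filtering model.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
BLOCKED_PHRASES = ("guaranteed returns", "medical diagnosis")

FALLBACK_MESSAGE = "Sorry, I can't share that response. A team member will follow up."


def check_output(text: str) -> list[str]:
    """Return the reasons the text should be held back (empty list if none)."""
    problems = []
    if EMAIL_PATTERN.search(text):
        problems.append("contains an email address")
    lowered = text.lower()
    for phrase in BLOCKED_PHRASES:
        if phrase in lowered:
            problems.append(f"contains blocked phrase: {phrase}")
    return problems


def deliver(text: str) -> str:
    """Pass clean output through; replace flagged output with a fallback."""
    if check_output(text):
        return FALLBACK_MESSAGE
    return text
```

Returning the list of reasons, rather than a bare yes or no, keeps the check auditable: you can log why something was blocked and tune the rules later.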

AI governance frameworks.

An AI governance framework is a structured policy document (and set of technical controls) that defines how AI is used within an organisation: what’s permitted, what requires review, how errors are reported, and who’s accountable. Companies like IBM and Google publish their own frameworks; regulators in the EU, UK, and increasingly India are beginning to mandate similar structures at the national level.
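The "technical controls" half of a framework can be as simple as a policy table that code consults before the model is used. Here’s a minimal sketch under that assumption; the use-case names, owners, and the `policy_for` helper are hypothetical and not drawn from any published framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UsePolicy:
    permitted: bool
    requires_human_review: bool
    accountable_owner: str


# Hypothetical policy table; categories and owners are illustrative only.
POLICY = {
    "marketing_brainstorm": UsePolicy(True, False, "marketing-lead"),
    "customer_support_reply": UsePolicy(True, True, "support-manager"),
    "legal_advice": UsePolicy(False, True, "general-counsel"),
}

# Unknown use cases fall back to the most restrictive policy.
DEFAULT_POLICY = UsePolicy(False, True, "ai-governance-board")


def policy_for(use_case: str) -> UsePolicy:
    """Look up the policy for a use case, defaulting to the restrictive one."""
    return POLICY.get(use_case, DEFAULT_POLICY)
```

Defaulting to "not permitted" for anything unlisted means a new use of AI has to be reviewed and added to the table before it can go live, which mirrors how the written policy is meant to work.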

What Type of Data Is Generative AI Most Suitable For?

Here’s a dimension that doesn’t get discussed enough. What type of data is generative AI most suitable for? Unstructured data (natural language, images, and audio) is where it genuinely excels. Structured, factual, or highly precise data (clinical records, financial calculations, legal precedents) is where uncontrolled output is most dangerous.

This matters because many organisations are deploying generative AI in domains it isn’t well-suited for without the appropriate controls in place. Using ChatGPT to brainstorm a marketing campaign? Relatively low stakes, moderate oversight needed. Using it to draft medical guidance or financial disclosures? The control requirements are categorically different.

One thing current generative AI applications cannot do reliably is reason about truly novel situations without a structured knowledge base to draw from. Knowing that limitation is the first step toward deploying AI responsibly.

What Can Be Done: A Realistic Checklist

You don’t need a machine learning PhD to start controlling AI outputs more effectively. Here’s what actually works in practice:

- Always verify AI-generated facts before publishing or acting on them, especially in high-stakes domains

- Use structured prompts; vague inputs produce vague (and often inaccurate) outputs

- Choose RAG-enabled tools for research and factual tasks (Perplexity AI is built around this approach)

- Keep humans in the loop for anything consequential: content moderation, legal review, and medical guidance

- Document your AI use and maintain a record of what was generated, reviewed, and approved
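For the last item on that list, a record doesn’t have to be elaborate. The sketch below shows one possible shape for an audit entry, assuming you want to log who reviewed what and when. The field names are hypothetical, and the log is kept in an in-memory list here; in practice you would write each line to a file or database.

```python
import hashlib
import json
from datetime import datetime, timezone


def make_audit_record(prompt: str, output: str, reviewer: str, approved: bool) -> dict:
    """Describe one generate-review-approve cycle as a plain dictionary."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prompt": prompt,
        # A hash lets you prove later which exact text was reviewed.
        "output_sha256": hashlib.sha256(output.encode("utf-8")).hexdigest(),
        "reviewer": reviewer,
        "approved": approved,
    }


def append_record(record: dict, log_lines: list[str]) -> None:
    """Store the record as one JSON line (in memory for this sketch)."""
    log_lines.append(json.dumps(record, sort_keys=True))


audit_log: list[str] = []
record = make_audit_record(
    prompt="Summarise our refund policy",
    output="Refunds are issued within 14 days.",
    reviewer="alice",
    approved=True,
)
append_record(record, audit_log)
```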

If you run an online store, this is especially relevant. AI chatbots in ecommerce are a prime example of where controlled, well-supervised output directly affects customer trust and conversion. These aren’t limitations on what AI can do for you. They’re the things that make AI actually useful over time.

The Bigger Picture: Why This Matters for Everyone

AI governance isn’t just a concern for large enterprises or government regulators. If you’re a freelancer using Claude to write client copy, a student using Gemini to research an essay, or a startup founder using GPT-4 to draft legal templates, you’re in the generative AI ecosystem.

The quality of what you produce depends, in part, on how well you understand and manage the output. Students are a big part of this shift too: the role of technology in education is evolving fast enough that understanding AI output quality has become a genuinely useful skill in academic settings.

Sam Altman (CEO of OpenAI) acknowledged this directly: people have a very high degree of trust in ChatGPT, which makes the accuracy problem more dangerous, not less, because the confident tone of AI output masks uncertainty that’s often still there.

The good news? Hallucination rates are declining. Gemini 2.0 Flash now achieves a hallucination rate as low as 0.7% on summarisation benchmarks. RAG and structured prompting are genuinely effective. The tools to control generative AI output are improving faster than most people realise.

It’s worth noting that AI governance and output quality review are showing up in job descriptions now (roles that simply didn’t exist a few years ago), as this roundup of emerging job profiles makes clear.

But improvement at the model level doesn’t remove the responsibility at the user and developer level. Understanding why controlling the output of generative AI systems is important is, honestly, the first step toward sustainably using this technology, for your work, your organisation, and the people who ultimately rely on what you produce.

FAQs

What are AI hallucinations?

AI hallucinations are instances where a generative AI model produces confident but factually incorrect or fabricated information. On complex queries, hallucination rates can exceed 33% even in leading models. A Deloitte survey found 47% of enterprise users made at least one major business decision based on hallucinated AI content in 2024.

What are the ethical considerations when using generative AI?

Key ethical considerations include bias in AI outputs, privacy risks when sensitive data is used in prompts, the spread of misinformation, lack of transparency in how models make decisions, and the potential for AI to be used to generate harmful or deceptive content like deepfakes. Responsible use requires human oversight, clear governance policies, and regular output auditing.

What is the responsibility of developers using generative AI?

Developers are responsible for what their AI systems produce, including unintended outputs. This means building content filters, guardrails, and human review processes, clearly documenting model limitations, and ensuring their systems comply with applicable regulations like the EU AI Act or GDPR.

What is one thing current generative AI applications cannot do?

Current generative AI cannot reliably reason about genuinely novel situations without a structured external knowledge base. This is why techniques like Retrieval-Augmented Generation (RAG), which grounds AI responses in verified documents, are so effective at reducing errors and hallucinations.

How has generative AI affected security?

Generative AI has introduced new security risks, including AI-generated phishing content, deepfakes for social engineering, and the potential for models to inadvertently expose sensitive training data. These risks make output control and AI governance frameworks essential for any organisation deploying generative AI in customer-facing or data-sensitive environments.

What is an AI governance framework?

An AI governance framework is a structured set of policies and technical controls that define how an organisation uses AI, what outputs are acceptable, how errors are reviewed, who holds accountability, and how compliance is maintained. Major regulators in the EU, UK, and India are increasingly mandating these frameworks for organisations deploying AI at scale.