You’ve probably been there. You open ChatGPT, type something like “write me a product description”, and what comes back is… fine. Generic. Forgettable. The kind of copy that could describe literally any product on any planet.

But here’s the thing: the AI didn’t fail you. Your prompt did. This is why understanding the purpose of prompt engineering in gen AI systems is so important.

That’s what makes prompt engineering such a game-changer right now. It’s not about coding skills or having a computer science degree. It’s about learning how to talk to AI systems in a way that actually gets results. And once you understand the purpose of prompt engineering in gen AI systems, you’ll never look at a blank ChatGPT window the same way again.

So, What Exactly Is Prompt Engineering?

Let’s keep this simple before we go deeper. Prompt engineering is the practice of designing and refining the instructions you give to a generative AI model so it produces accurate, relevant, and useful outputs.

Think of it like this: you’re a chef, and the AI is your kitchen assistant. If you say “make something,” you’ll get something. But if you say “make a spicy Kerala-style fish curry with coconut milk, medium heat, ready in 30 minutes,” you’ll get exactly what you wanted. Same assistant. Wildly different result.

That’s what a well-crafted prompt does. It removes ambiguity and gives the model just enough context to do its job well.


What Is the Purpose of Prompt Engineering in Gen AI Systems?

This is the real question, isn’t it? Why does it even matter how you phrase a prompt? Isn’t the AI supposed to figure that out? Not quite. And understanding why changes everything about how you use these tools.

To Improve Output Quality

The most obvious purpose: better prompts produce better outputs. When you give a Gen AI model like ChatGPT, Claude or Gemini a vague instruction, it fills in the gaps with statistical guesswork. The result tends to be broad, surface-level, and often inaccurate. A specific, structured prompt narrows those guesses down dramatically.

Here’s a quick comparison:

Weak prompt: “Write about climate change.”

Engineered prompt: “Write a 200-word explainer on how climate change affects monsoon patterns in India, for a class 10 student. Use simple language.”

Same AI. Completely different output. That’s the power of intent made explicit.

To Control Gen AI Behaviour, And Why That Matters

Why is controlling the output of generative AI systems so important? Because these models are enormously capable but fundamentally unpredictable without guidance. Left to their own devices, they can hallucinate facts, produce biased content, or go completely off-topic.

Prompt engineering acts as a guardrail. It shapes not just what the AI says, but how it says it: the tone, the structure, the depth, the perspective. For businesses using AI in customer service or content pipelines, this control is non-negotiable.

To Bridge the Gap Between Human Intent and Machine Response

Generative AI doesn’t read minds. It reads tokens, tiny chunks of text that it processes through billions of parameters. The main goal of generative AI is to produce content that’s genuinely useful to humans. But there’s a translation gap between what you mean and what you type.

Prompt engineering closes that gap. It’s essentially the art of communicating with a very intelligent, very literal machine.

To Reduce Hallucinations and Errors

One more thing, and this one surprises people: structured prompts can reduce AI hallucinations. When you give the model a clear role, a specific task, and defined constraints, you lower the chances of it inventing information, because it has less room to wander.
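To make this concrete, here is a minimal Python sketch of a prompt that combines a role, a task, and one hard constraint: answer only from the supplied context, and say so when the answer isn't there. The function name `build_grounded_prompt`, the fallback phrase, and the sample returns policy are all hypothetical. It only builds the prompt text and does not call any model; a constraint like this lowers the chance of invented answers rather than ruling them out.

```python
# Hypothetical fallback phrase the model is told to use when the context
# does not contain the answer.
FALLBACK = "I don't know based on the provided context."


def build_grounded_prompt(role: str, question: str, context: str) -> str:
    """Combine a role, a bounded context, and explicit constraints into one prompt."""
    return (
        f"You are {role}.\n"
        "Answer the question using ONLY the context below.\n"
        f"If the context does not contain the answer, reply exactly: {FALLBACK}\n"
        "Do not add facts that are not in the context.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


# Illustrative inputs only; the brand and policy are made up.
prompt = build_grounded_prompt(
    role="a support assistant for a sneaker brand",
    question="What is the return window?",
    context="Returns are accepted within 30 days of delivery with the original receipt.",
)
print(prompt)
```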

How Does Prompt Engineering Actually Work?

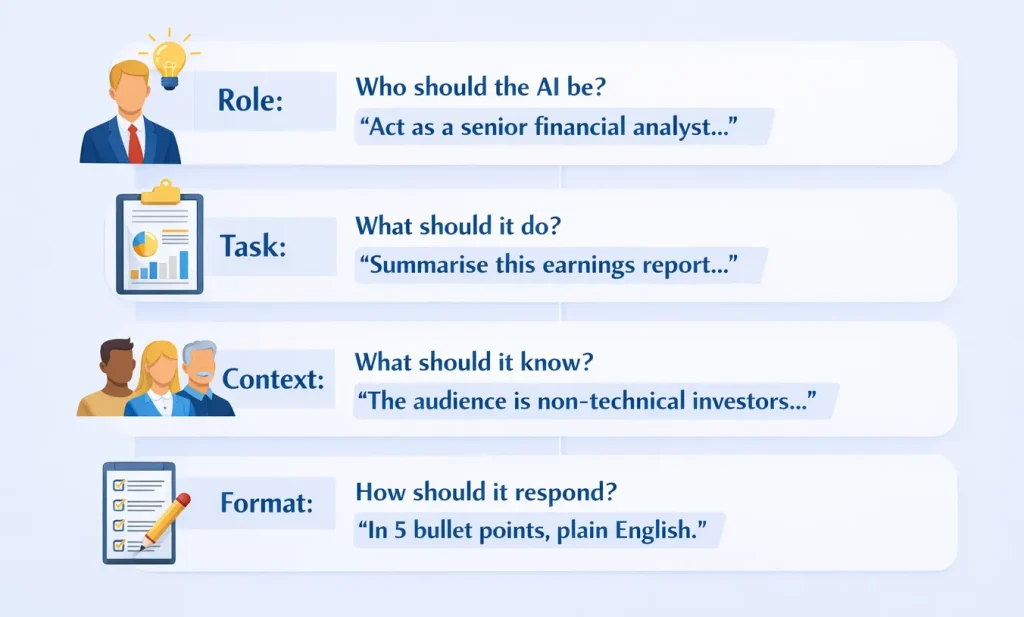

Let’s get really practical. A well-engineered prompt usually has four basic components:

- Role: Who should the AI be? “Act as a senior financial analyst…”

- Task: What should it do? “Summarise this earnings report…”

- Context: What should it know? “The audience is non-technical investors…”

- Format: How should it respond? “In 5 bullet points, plain English.”

That’s it. You don’t need a PhD. You need structure. This approach works across ChatGPT, Claude, Gemini, and Microsoft Copilot, though each model responds a little differently to the same prompt. Claude tends to be more nuanced with long-form content, while Gemini tends to handle real-time queries better. Experimenting is part of the process.
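The four components map neatly onto a small template. This is a minimal Python sketch, not any tool's official format: the `PromptSpec` class and the order and labels of the sections are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class PromptSpec:
    """Hypothetical container for the four components: role, task, context, format."""
    role: str
    task: str
    context: str
    output_format: str

    def render(self) -> str:
        # Join the parts in a fixed order so every prompt has the same structure.
        return "\n".join([
            f"Act as {self.role}.",
            f"Task: {self.task}",
            f"Context: {self.context}",
            f"Format: {self.output_format}",
        ])


spec = PromptSpec(
    role="a senior financial analyst",
    task="Summarise this earnings report.",
    context="The audience is non-technical investors.",
    output_format="5 bullet points, plain English.",
)
print(spec.render())
```

Because the structure is fixed, you can swap in a different role or audience without rewriting the whole prompt. In practice you would also paste the report itself after the rendered instructions.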

The Main Prompt Engineering Techniques (With Real Examples)

Honestly, most guides list these techniques like they’re items in a grocery store. Let me actually explain when and why you’d use each one.

Zero-Shot Prompting

No examples given, just a direct instruction. Zero-shot prompting is best for simple, well-defined tasks, for example: “List 5 disadvantages of remote work in bullet points.” Clean. Direct. It works well for summarisation, formatting, and basic Q&A.

Few-Shot Prompting

You give the model 2–3 examples before the actual task. This is brilliant for tone-matching and classification problems. “Here are two taglines I like: ‘Bold taste, zero compromise’ and ‘Made for the roads less driven.’ Now write three taglines for a sustainable sneaker brand in the same style.”

The AI now understands your taste, not just your task.
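Under the hood, a few-shot prompt is just the examples followed by the new task. Here is a minimal Python sketch reusing the tagline example; `build_few_shot_prompt` is a hypothetical helper, and numbering the examples is a stylistic choice rather than a requirement.

```python
def build_few_shot_prompt(examples: list[str], task: str) -> str:
    """Show the model example outputs first, then state the new task."""
    lines = ["Here are examples of taglines I like:"]
    # Number each example so the model can see where one ends and the next begins.
    for i, example in enumerate(examples, start=1):
        lines.append(f"{i}. {example}")
    lines.append("")
    lines.append(f"Now {task} Match the style of the examples.")
    return "\n".join(lines)


prompt = build_few_shot_prompt(
    examples=["Bold taste, zero compromise", "Made for the roads less driven"],
    task="write three taglines for a sustainable sneaker brand.",
)
print(prompt)
```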

Chain-of-Thought (CoT) Prompting

In chain-of-thought (CoT) prompting, you ask the AI to think out loud. Literally. Just add: “Let’s think step by step.” This technique can significantly improve accuracy on complex problems such as logic puzzles, maths, and multi-step reasoning, because it pushes the model to break things down rather than jump to a conclusion.
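Here is a minimal Python sketch of a chain-of-thought prompt. Beyond the “Let’s think step by step” cue, it assumes one extra convention of our own: asking the model to put its final answer on a line starting with `Answer:` so the reasoning can be separated from the result. Both helper names and the sample response are hypothetical, and no model is called.

```python
import re
from typing import Optional


def build_cot_prompt(question: str) -> str:
    """Append a step-by-step cue and ask for the final answer on its own line."""
    return (
        f"{question}\n"
        "Let's think step by step. "
        "When you are done, write the final answer on a new line starting with 'Answer:'."
    )


def extract_answer(response: str) -> Optional[str]:
    """Return the text after the last 'Answer:' line, or None if there isn't one."""
    matches = re.findall(r"^Answer:\s*(.+)$", response, flags=re.MULTILINE)
    return matches[-1].strip() if matches else None


# A made-up response standing in for what a model might return.
sample_response = (
    "The train covers 120 km in 2 hours.\n"
    "120 / 2 = 60.\n"
    "Answer: 60 km/h"
)
print(build_cot_prompt("A train travels 120 km in 2 hours. What is its average speed?"))
print(extract_answer(sample_response))  # 60 km/h
```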

Role Prompting

This one’s underrated. Setting a persona can change the output dramatically. “You are an experienced HR manager at a mid-size tech startup. A junior employee wants to negotiate a salary hike. How should they approach the conversation?” And suddenly the output has context, nuance, and cultural relevance, not just generic career advice.
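In chat-style APIs, a persona usually goes in a system message, separate from the user's question. This Python sketch only builds that message list. The `{"role": ..., "content": ...}` shape follows a common chat-message convention, but field names and where the system prompt goes differ between providers, so treat the shape as an assumption and check your API's documentation.

```python
def build_role_messages(persona: str, user_question: str) -> list[dict]:
    """Put the persona in a system message and the question in a user message."""
    return [
        {"role": "system", "content": f"You are {persona}."},
        {"role": "user", "content": user_question},
    ]


messages = build_role_messages(
    persona="an experienced HR manager at a mid-size tech startup",
    user_question=(
        "A junior employee wants to negotiate a salary hike. "
        "How should they approach the conversation?"
    ),
)
for m in messages:
    print(f"[{m['role']}] {m['content']}")
```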

Iterative Prompting

Here’s the truth: your first prompt is rarely your best one. Iterative prompting means you treat the first response as a draft and keep refining. “Make it shorter.” “Add an example.” “Sound less corporate.” Each round gets you closer to what you actually need.
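Iterative prompting is essentially a loop that keeps the conversation history and adds one refinement at a time. In this minimal Python sketch, `fake_model` is a hypothetical stand-in for a real model call (it just labels each draft), so the loop runs without any API.

```python
from typing import Callable

Message = dict[str, str]


def refine(model: Callable[[list[Message]], str], first_prompt: str,
           feedback: list[str]) -> list[Message]:
    """Send a first prompt, then each piece of feedback as a follow-up turn.

    The full history is passed on every call so the model can see its previous draft.
    """
    history: list[Message] = [{"role": "user", "content": first_prompt}]
    history.append({"role": "assistant", "content": model(history)})
    for note in feedback:
        history.append({"role": "user", "content": note})
        history.append({"role": "assistant", "content": model(history)})
    return history


def fake_model(history: list[Message]) -> str:
    """Hypothetical stand-in for a real model: labels the draft by user-turn count."""
    user_turns = sum(1 for m in history if m["role"] == "user")
    return f"draft {user_turns}"


history = refine(fake_model, "Write a product description for a steel water bottle.",
                 ["Make it shorter.", "Add an example.", "Sound less corporate."])
print(history[-1]["content"])  # draft 4
```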

| Technique | Best For | Quick Example |
|---|---|---|
| Zero-shot | Simple, clear tasks | “Translate this to Hindi” |
| Few-shot | Style/tone matching | “Write like these examples…” |
| Chain-of-thought | Complex reasoning | “…let’s think step by step” |
| Role prompting | Contextual responses | “Act as a startup founder…” |
| Iterative | Refinement | “Make it more conversational” |

Where Is Prompt Engineering Actually Being Used?

Content teams at Indian startups are using engineered prompts to generate article content in multiple languages. Customer service platforms run chatbots guided by carefully crafted system prompts so they stay on-brand. Software developers are prompting AI to write, debug, and document code, work that can save development teams many hours.

Many of these use cases align closely with how social media marketing works today, especially when it comes to automation and personalisation.

In healthcare, the role of generative AI in drug discovery is growing. Researchers use prompt engineering to extract specific data patterns from medical literature that would take humans weeks to process. Edtech companies in India are building AI tutors that adapt to a student’s syllabus, grade, and learning pace, all driven by structured prompts.

If you can define a task clearly, prompt engineering can often help automate it. That’s the bottom line.

A Few Things to Keep in Mind When Prompting

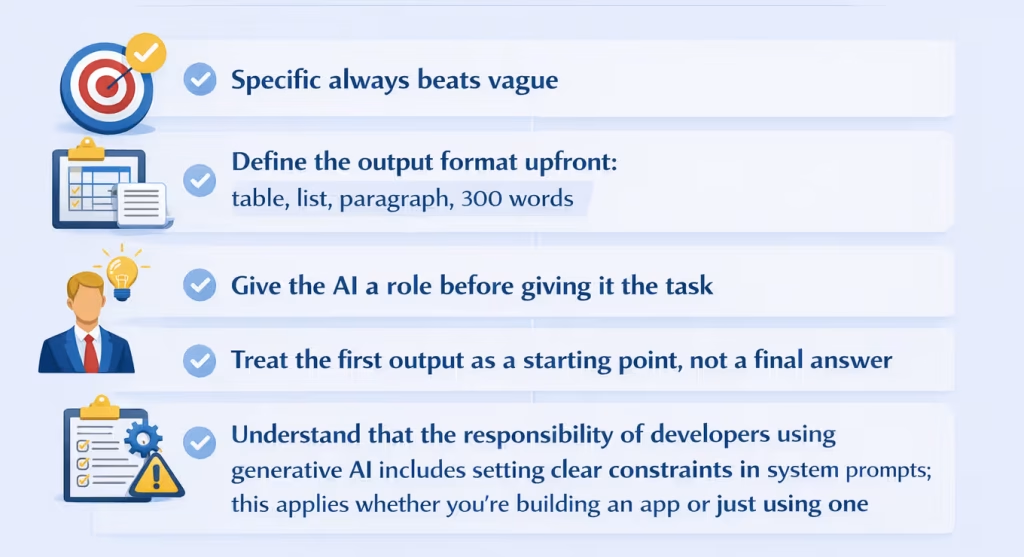

You don’t need a rulebook here. But a few habits make a real difference:

- Specific always beats vague

- Define the output format upfront: table, list, paragraph, 300 words

- Give the AI a role before giving it the task

- Treat the first output as a starting point, not a final answer

- If you’re building an app on generative AI, part of your responsibility as a developer is setting clear constraints in the system prompt; the same habit of stating constraints pays off when you’re simply using one
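As a sketch of what clear constraints might look like inside an app, this Python example renders a list of rules into a system prompt and adds a cheap check on the reply's length, since a model will not always follow its instructions. The brand name `ExampleShoes`, the rules, and both helper functions are hypothetical.

```python
def build_system_prompt(brand: str, rules: list[str]) -> str:
    """Render a list of constraints into a numbered system prompt."""
    numbered = "\n".join(f"{i}. {rule}" for i, rule in enumerate(rules, start=1))
    return (
        f"You are the customer support assistant for {brand}.\n"
        f"Follow these rules:\n{numbered}"
    )


def within_word_limit(text: str, max_words: int) -> bool:
    """Cheap post-check on a reply, because the prompt alone cannot guarantee compliance."""
    return len(text.split()) <= max_words


# Hypothetical brand and rules, for illustration only.
rules = [
    "Answer in plain English, in at most 100 words.",
    "Only discuss orders, shipping, and returns.",
    "If you are unsure, say so and offer to connect the customer with a human agent.",
]
system_prompt = build_system_prompt("ExampleShoes", rules)
print(system_prompt)
print(within_word_limit("Your order ships in 2 days.", 100))  # True
```

Keeping the rules in a list makes them easy to review and update without touching the rest of the prompt.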

One thing worth noting: what type of data is generative AI most suitable for? Unstructured data, such as text, images, and audio. The more unstructured and creative your task, the more valuable good prompting becomes.

Is Prompt Engineering a Career in India?

Yes, and it’s growing faster than most people expected.

According to McKinsey’s Global Survey, 7% of organisations using AI are already hiring prompt engineers. In India, prompt engineering roles are appearing in job listings at edtech companies, AI startups, content agencies, and enterprise IT firms. Salaries range roughly from ₹4 LPA for entry-level roles to ₹18 LPA or more at product companies, depending on experience and the tools involved.

If you want to formalise your skills, IBM’s Generative AI Prompt Engineering course on Coursera is a solid starting point. Oracle and Google Cloud also offer generative AI certifications worth adding to a LinkedIn profile.

Conclusion

Prompt engineering isn’t a tech trend that’ll fade by next quarter. It’s fast becoming a core skill, like knowing how to use Google, but with far higher stakes and far more creative possibilities.

The purpose of prompt engineering in gen AI systems is simple at its heart: make these incredibly powerful tools actually work for you, reliably and consistently. Whether you’re a student, a developer, a content creator, or a business owner in India trying to figure out how AI fits into your workflow, this is the skill that separates people who use AI from people who leverage it.

Start with one technique. Try role prompting on your next ChatGPT session. See what changes.

FAQs

Prompt engineering is the process of designing clear and specific instructions for AI tools like ChatGPT so they generate better results. Instead of giving vague commands, you guide the AI with context, role, and format.

Beginners can improve prompts by being specific, defining output format, giving context, and refining results iteratively. Treat the first output as a draft and keep improving the prompt.

An AI prompt generator is a tool that helps create structured prompts automatically. It simplifies the process for beginners and helps keep instructions consistent and well-structured for better AI outputs.

Prompt engineering helps reduce errors by adding clarity, constraints, and context. When instructions are precise, the AI has less room to guess or generate incorrect information.

An effective prompt is clear, specific, and structured. It defines the task, provides context, assigns a role if needed, and specifies the output format. The more precise your instructions, the better the AI response.

Yes, prompt engineering is widely used in business workflows such as content creation, customer support, data analysis, and automation. It helps improve efficiency and consistency.

Yes, structured prompts with clear constraints and context can reduce hallucinations by limiting the AI’s tendency to guess or fabricate information.